Search Results for author: Christopher Clark

Found 17 papers, 12 papers with code

Unified-IO 2: Scaling Autoregressive Multimodal Models with Vision, Language, Audio, and Action

1 code implementation • 28 Dec 2023 • Jiasen Lu, Christopher Clark, Sangho Lee, Zichen Zhang, Savya Khosla, Ryan Marten, Derek Hoiem, Aniruddha Kembhavi

We present Unified-IO 2, the first autoregressive multimodal model that is capable of understanding and generating image, text, audio, and action.

Holodeck: Language Guided Generation of 3D Embodied AI Environments

1 code implementation • 14 Dec 2023 • Yue Yang, Fan-Yun Sun, Luca Weihs, Eli VanderBilt, Alvaro Herrasti, Winson Han, Jiajun Wu, Nick Haber, Ranjay Krishna, Lingjie Liu, Chris Callison-Burch, Mark Yatskar, Aniruddha Kembhavi, Christopher Clark

3D simulated environments play a critical role in Embodied AI, but their creation requires expertise and extensive manual effort, restricting their diversity and scope.

Exposing and Addressing Cross-Task Inconsistency in Unified Vision-Language Models

1 code implementation • 28 Mar 2023 • Adyasha Maharana, Amita Kamath, Christopher Clark, Mohit Bansal, Aniruddha Kembhavi

As general purpose vision models get increasingly effective at a wide set of tasks, it is imperative that they be consistent across the tasks they support.

I Can't Believe There's No Images! Learning Visual Tasks Using only Language Supervision

1 code implementation • ICCV 2023 • Sophia Gu, Christopher Clark, Aniruddha Kembhavi

We produce models using only text training data on four representative tasks: image captioning, visual entailment, visual question answering and visual news captioning, and evaluate them on standard benchmarks using images.

Unified-IO: A Unified Model for Vision, Language, and Multi-Modal Tasks

no code implementations • 17 Jun 2022 • Jiasen Lu, Christopher Clark, Rowan Zellers, Roozbeh Mottaghi, Aniruddha Kembhavi

We propose Unified-IO, a model that performs a large variety of AI tasks spanning classical computer vision tasks, including pose estimation, object detection, depth estimation and image generation, vision-and-language tasks such as region captioning and referring expression, to natural language processing tasks such as question answering and paraphrasing.

Ranked #1 on Object Segmentation on GRIT

A-OKVQA: A Benchmark for Visual Question Answering using World Knowledge

1 code implementation • 3 Jun 2022 • Dustin Schwenk, Apoorv Khandelwal, Christopher Clark, Kenneth Marino, Roozbeh Mottaghi

In contrast to the existing knowledge-based VQA datasets, the questions generally cannot be answered by simply querying a knowledge base, and instead require some form of commonsense reasoning about the scene depicted in the image.

Webly Supervised Concept Expansion for General Purpose Vision Models

no code implementations • 4 Feb 2022 • Amita Kamath, Christopher Clark, Tanmay Gupta, Eric Kolve, Derek Hoiem, Aniruddha Kembhavi

This work presents an effective and inexpensive alternative: learn skills from supervised datasets, learn concepts from web image search, and leverage a key characteristic of GPVs: the ability to transfer visual knowledge across skills.

Ranked #2 on Visual Question Answering (VQA) on GRIT

Iconary: A Pictionary-Based Game for Testing Multimodal Communication with Drawings and Text

1 code implementation • EMNLP 2021 • Christopher Clark, Jordi Salvador, Dustin Schwenk, Derrick Bonafilia, Mark Yatskar, Eric Kolve, Alvaro Herrasti, Jonghyun Choi, Sachin Mehta, Sam Skjonsberg, Carissa Schoenick, Aaron Sarnat, Hannaneh Hajishirzi, Aniruddha Kembhavi, Oren Etzioni, Ali Farhadi

We investigate these challenges in the context of Iconary, a collaborative game of drawing and guessing based on Pictionary, that poses a novel challenge for the research community.

Learning to Model and Ignore Dataset Bias with Mixed Capacity Ensembles

1 code implementation • Findings of the Association for Computational Linguistics 2020 • Christopher Clark, Mark Yatskar, Luke Zettlemoyer

We evaluate performance on synthetic datasets, and four datasets built to penalize models that exploit known biases on textual entailment, visual question answering, and image recognition tasks.

Long-distance Detection of Bioacoustic Events with Per-channel Energy Normalization

no code implementations • 1 Nov 2019 • Vincent Lostanlen, Kaitlin Palmer, Elly Knight, Christopher Clark, Holger Klinck, Andrew Farnsworth, Tina Wong, Jason Cramer, Juan Pablo Bello

This paper proposes to perform unsupervised detection of bioacoustic events by pooling the magnitudes of spectrogram frames after per-channel energy normalization (PCEN).

Don't Take the Easy Way Out: Ensemble Based Methods for Avoiding Known Dataset Biases

3 code implementations • IJCNLP 2019 • Christopher Clark, Mark Yatskar, Luke Zettlemoyer

Our method has two stages: we (1) train a naive model that makes predictions exclusively based on dataset biases, and (2) train a robust model as part of an ensemble with the naive one in order to encourage it to focus on other patterns in the data that are more likely to generalize.

Ranked #5 on Visual Question Answering (VQA) on VQA-CP

BoolQ: Exploring the Surprising Difficulty of Natural Yes/No Questions

1 code implementation • NAACL 2019 • Christopher Clark, Kenton Lee, Ming-Wei Chang, Tom Kwiatkowski, Michael Collins, Kristina Toutanova

In this paper we study yes/no questions that are naturally occurring, meaning that they are generated in unprompted and unconstrained settings.

Ranked #26 on Question Answering on BoolQ

Deep contextualized word representations

46 code implementations • NAACL 2018 • Matthew E. Peters, Mark Neumann, Mohit Iyyer, Matt Gardner, Christopher Clark, Kenton Lee, Luke Zettlemoyer

We introduce a new type of deep contextualized word representation that models both (1) complex characteristics of word use (e.g., syntax and semantics), and (2) how these uses vary across linguistic contexts (i.e., to model polysemy).

Ranked #3 on Only Connect Walls Dataset Task 1 (Grouping) on OCW (Wasserstein Distance (WD) metric, using extra training data)

Citation Intent Classification

Conversational Response Selection

+8

Simple and Effective Multi-Paragraph Reading Comprehension

1 code implementation • ACL 2018 • Christopher Clark, Matt Gardner

We consider the problem of adapting neural paragraph-level question answering models to the case where entire documents are given as input.

Ranked #28 on Question Answering on TriviaQA (using extra training data)

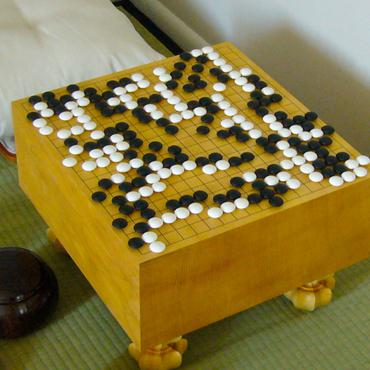

Teaching Deep Convolutional Neural Networks to Play Go

1 code implementation • 10 Dec 2014 • Christopher Clark, Amos Storkey

Our final networks are able to achieve move prediction accuracies of 41.1% and 44.4% on two different Go datasets, surpassing previous state of the art on this task by significant margins.

Classification for Big Dataset of Bioacoustic Signals Based on Human Scoring System and Artificial Neural Network

no code implementations • 15 May 2013 • Mohammad Pourhomayoun, Peter Dugan, Marian Popescu, Denise Risch, Hal Lewis, Christopher Clark

In this paper, we propose a method to improve sound classification performance by combining signal features, derived from the time-frequency spectrogram, with human perception.

Bioacoustic Signal Classification Based on Continuous Region Processing, Grid Masking and Artificial Neural Network

no code implementations • 15 May 2013 • Mohammad Pourhomayoun, Peter Dugan, Marian Popescu, Christopher Clark

In this paper, we develop a novel method based on machine-learning and image processing to identify North Atlantic right whale (NARW) up-calls in the presence of high levels of ambient and interfering noise.