Search Results for author: Jie Hu

Found 83 papers, 30 papers with code

Collaboration of Teachers for Semi-supervised Object Detection

no code implementations • 22 May 2024 • Liyu Chen, Huaao Tang, Yi Wen, Hanting Chen, Wei Li, Junchao Liu, Jie Hu

To address these issues, we propose the Collaboration of Teachers Framework (CTF), which consists of multiple pairs of teacher and student models for training.

Determining cell population size from cell fraction in cell plasticity models

no code implementations • 7 May 2024 • Yuman Wang, Shuli Chen, Jie Hu, Da Zhou

In response to this challenge, we present two computational approaches grounded in stochastic cell population models: the first-order moment method (FOM) and the second-order moment method (SOM).

U-DiTs: Downsample Tokens in U-Shaped Diffusion Transformers

1 code implementation • 4 May 2024 • Yuchuan Tian, Zhijun Tu, Hanting Chen, Jie Hu, Chao Xu, Yunhe Wang

Diffusion Transformers (DiTs) introduce the transformer architecture to diffusion tasks for latent-space image generation.

AnomalyXFusion: Multi-modal Anomaly Synthesis with Diffusion

1 code implementation • 30 Apr 2024 • Jie Hu, Yawen Huang, Yilin Lu, Guoyang Xie, Guannan Jiang, Yefeng Zheng, Zhichao Lu

The AnomalyXFusion framework comprises two distinct yet synergistic modules: the Multi-modal In-Fusion (MIF) module and the Dynamic Dif-Fusion (DDF) module.

Rethinking 3D Dense Caption and Visual Grounding in A Unified Framework through Prompt-based Localization

no code implementations • 17 Apr 2024 • Yongdong Luo, Haojia Lin, Xiawu Zheng, Yigeng Jiang, Fei Chao, Jie Hu, Guannan Jiang, Songan Zhang, Rongrong Ji

3D Visual Grounding (3DVG) and 3D Dense Captioning (3DDC) are two crucial tasks in various 3D applications, which require both shared and complementary information in localization and visual-language relationships.

LIPT: Latency-aware Image Processing Transformer

no code implementations • 9 Apr 2024 • Junbo Qiao, Wei Li, Haizhen Xie, Hanting Chen, Yunshuai Zhou, Zhijun Tu, Jie Hu, Shaohui Lin

Extensive experiments on multiple image processing tasks (e.g., image super-resolution (SR), JPEG artifact reduction, and image denoising) demonstrate the superiority of LIPT on both latency and PSNR.

Knowledge Distillation with Multi-granularity Mixture of Priors for Image Super-Resolution

no code implementations • 3 Apr 2024 • Simiao Li, Yun Zhang, Wei Li, Hanting Chen, Wenjia Wang, BingYi Jing, Shaohui Lin, Jie Hu

Knowledge distillation (KD) is a promising yet challenging model compression technique that transfers rich learning representations from a well-performing but cumbersome teacher model to a compact student model.

IPT-V2: Efficient Image Processing Transformer using Hierarchical Attentions

no code implementations • 31 Mar 2024 • Zhijun Tu, Kunpeng Du, Hanting Chen, Hailing Wang, Wei Li, Jie Hu, Yunhe Wang

Recent advances have demonstrated the powerful capability of transformer architecture in image restoration.

Distilling Semantic Priors from SAM to Efficient Image Restoration Models

no code implementations • 25 Mar 2024 • Quan Zhang, Xiaoyu Liu, Wei Li, Hanting Chen, Junchao Liu, Jie Hu, Zhiwei Xiong, Chun Yuan, Yunhe Wang

SPD leverages a self-distillation manner to distill the fused semantic priors to boost the performance of original IR models.

Proxy-RLHF: Decoupling Generation and Alignment in Large Language Model with Proxy

no code implementations • 7 Mar 2024 • Yu Zhu, Chuxiong Sun, Wenfei Yang, Wenqiang Wei, Bo Tang, Tianzhu Zhang, Zhiyu Li, Shifeng Zhang, Feiyu Xiong, Jie Hu, MingChuan Yang

Reinforcement Learning from Human Feedback (RLHF) is the prevailing approach to ensure Large Language Models (LLMs) align with human values.

Self-Supervised Representation Learning with Meta Comprehensive Regularization

no code implementations • 3 Mar 2024 • Huijie Guo, Ying Ba, Jie Hu, Lingyu Si, Wenwen Qiang, Lei Shi

Specifically, we update our proposed model through a bi-level optimization mechanism, enabling it to capture comprehensive features.

Accelerating Distributed Stochastic Optimization via Self-Repellent Random Walks

no code implementations • 18 Jan 2024 • Jie Hu, Vishwaraj Doshi, Do Young Eun

We study a family of distributed stochastic optimization algorithms where gradients are sampled by a token traversing a network of agents in random-walk fashion.

Central Limit Theorem for Two-Timescale Stochastic Approximation with Markovian Noise: Theory and Applications

no code implementations • 17 Jan 2024 • Jie Hu, Vishwaraj Doshi, Do Young Eun

Two-timescale stochastic approximation (TTSA) is among the most general frameworks for iterative stochastic algorithms.

Rethinking Dimensional Rationale in Graph Contrastive Learning from Causal Perspective

1 code implementation • 16 Dec 2023 • Qirui Ji, Jiangmeng Li, Jie Hu, Rui Wang, Changwen Zheng, Fanjiang Xu

To this end, with the purpose of exploring the intrinsic rationale of graphs, we accordingly propose to capture the dimensional rationale from graphs, which has not received sufficient attention in the literature.

CBQ: Cross-Block Quantization for Large Language Models

no code implementations • 13 Dec 2023 • Xin Ding, Xiaoyu Liu, Zhijun Tu, Yun Zhang, Wei Li, Jie Hu, Hanting Chen, Yehui Tang, Zhiwei Xiong, Baoqun Yin, Yunhe Wang

Post-training quantization (PTQ) has played a key role in compressing large language models (LLMs) with ultra-low costs.

GenDet: Towards Good Generalizations for AI-Generated Image Detection

1 code implementation • 12 Dec 2023 • Mingjian Zhu, Hanting Chen, Mouxiao Huang, Wei Li, Hailin Hu, Jie Hu, Yunhe Wang

The misuse of AI imagery can have harmful societal effects, prompting the creation of detectors to combat issues like the spread of fake news.

Massive Wireless Energy Transfer without Channel State Information via Imperfect Intelligent Reflecting Surfaces

no code implementations • 15 Nov 2023 • Cheng Luo, Jie Hu, Luping Xiang, Kun Yang, Kai-Kit Wong

Intelligent Reflecting Surface (IRS) utilizes low-cost, passive reflecting elements to enhance the passive beam gain, improve Wireless Energy Transfer (WET) efficiency, and enable its deployment for numerous Internet of Things (IoT) devices.

Learning-Based Biharmonic Augmentation for Point Cloud Classification

no code implementations • 10 Nov 2023 • Jiacheng Wei, Guosheng Lin, Henghui Ding, Jie Hu, Kim-Hui Yap

Point cloud datasets often suffer from inadequate sample sizes in comparison to image datasets, making data augmentation challenging.

Spatio-Temporal Meta Contrastive Learning

1 code implementation • 26 Oct 2023 • Jiabin Tang, Lianghao Xia, Jie Hu, Chao Huang

Although recent STGNN models with contrastive learning aim to address these challenges, most of them use pre-defined augmentation strategies that heavily depend on manual design and cannot be customized for different Spatio-Temporal Graph (STG) scenarios.

Robust NOMA-assisted OTFS-ISAC Network Design with 3D Motion Prediction Topology

no code implementations • 21 Oct 2023 • Luping Xiang, Ke Xu, Jie Hu, Christos Masouros, Kun Yang

This paper proposes a novel non-orthogonal multiple access (NOMA)-assisted orthogonal time-frequency space (OTFS)-integrated sensing and communication (ISAC) network, which uses unmanned aerial vehicles (UAVs) as air base stations to support multiple users.

Green Beamforming Design for Integrated Sensing and Communication Systems: A Practical Approach Using Beam-Matching Error Metrics

no code implementations • 21 Oct 2023 • Luping Xiang, Ke Xu, Jie Hu, Kun Yang

In this paper, we propose a green beamforming design for the integrated sensing and communication (ISAC) system, using beam-matching error to assess radar performance.

IFT: Image Fusion Transformer for Ghost-free High Dynamic Range Imaging

no code implementations • 26 Sep 2023 • Hailing Wang, Wei Li, Yuanyuan Xi, Jie Hu, Hanting Chen, Longyu Li, Yunhe Wang

By matching similar patches between frames, objects with large motion ranges in dynamic scenes can be aligned, which can effectively alleviate the generation of artifacts.

Data Upcycling Knowledge Distillation for Image Super-Resolution

1 code implementation • 25 Sep 2023 • Yun Zhang, Wei Li, Simiao Li, Hanting Chen, Zhijun Tu, Wenjia Wang, BingYi Jing, Shaohui Lin, Jie Hu

Knowledge distillation (KD) compresses deep neural networks by transferring task-related knowledge from cumbersome pre-trained teacher models to compact student models.

Ranked #22 on Image Super-Resolution on Urban100 - 4x upscaling

USL-Net: Uncertainty Self-Learning Network for Unsupervised Skin Lesion Segmentation

no code implementations • 23 Sep 2023 • Xiaofan Li, Bo Peng, Jie Hu, Changyou Ma, DaiPeng Yang, Zhuyang Xie

Rather than risk potential pseudo-labeling errors or learning confusion by forcefully classifying these regions, we consider them as uncertainty regions, exempting them from pseudo-labeling and allowing the network to self-learn.

Pseudo-label Alignment for Semi-supervised Instance Segmentation

1 code implementation • ICCV 2023 • Jie Hu, Chen Chen, Liujuan Cao, Shengchuan Zhang, Annan Shu, Guannan Jiang, Rongrong Ji

Through extensive experiments conducted on the COCO and Cityscapes datasets, we demonstrate that PAIS is a promising framework for semi-supervised instance segmentation, particularly in cases where labeled data is severely limited.

A Pre-trained Data Deduplication Model based on Active Learning

no code implementations • 31 Jul 2023 • Xinyao Liu, Shengdong Du, Fengmao Lv, Hongtao Xue, Jie Hu, Tianrui Li

In the era of big data, the issue of data quality has become increasingly prominent.

Persistent Ballistic Entanglement Spreading with Optimal Control in Quantum Spin Chains

no code implementations • 21 Jul 2023 • Ying Lu, Pei Shi, Xiao-Han Wang, Jie Hu, Shi-Ju Ran

In this work, we uncover that the "variational entanglement-enhancing" field (VEEF) robustly induces a persistent ballistic spreading of entanglement in quantum spin chains.

Augmenting Greybox Fuzzing with Generative AI

no code implementations • 11 Jun 2023 • Jie Hu, Qian Zhang, Heng Yin

Large language models (LLM) pre-trained with an enormous amount of natural language corpus have proved to be effective for understanding the implicit format syntax and generating format-conforming inputs.

Self-Repellent Random Walks on General Graphs -- Achieving Minimal Sampling Variance via Nonlinear Markov Chains

no code implementations • 8 May 2023 • Vishwaraj Doshi, Jie Hu, Do Young Eun

We consider random walks on discrete state spaces, such as general undirected graphs, where the random walkers are designed to approximate a target quantity over the network topology via sampling and neighborhood exploration in the form of Markov chain Monte Carlo (MCMC) procedures.

You Only Segment Once: Towards Real-Time Panoptic Segmentation

2 code implementations • CVPR 2023 • Jie Hu, Linyan Huang, Tianhe Ren, Shengchuan Zhang, Rongrong Ji, Liujuan Cao

To reduce the computational overhead, we design a feature pyramid aggregator for the feature map extraction, and a separable dynamic decoder for the panoptic kernel generation.

Bag of Tricks with Quantized Convolutional Neural Networks for image classification

no code implementations • 13 Mar 2023 • Jie Hu, Mengze Zeng, Enhua Wu

To bridge this gap, we collect and improve existing quantization methods and propose a gold guideline for post-training quantization.

Intriguing Property and Counterfactual Explanation of GAN for Remote Sensing Image Generation

1 code implementation • 9 Mar 2023 • Xingzhe Su, Wenwen Qiang, Jie Hu, Fengge Wu, Changwen Zheng, Fuchun Sun

Based on this SCM, we theoretically prove that the quality of generated images is positively correlated with the amount of feature information.

DistilPose: Tokenized Pose Regression with Heatmap Distillation

1 code implementation • CVPR 2023 • Suhang Ye, Yingyi Zhang, Jie Hu, Liujuan Cao, Shengchuan Zhang, Lei Shen, Jun Wang, Shouhong Ding, Rongrong Ji

Specifically, DistilPose maximizes the transfer of knowledge from the teacher model (heatmap-based) to the student model (regression-based) through Token-distilling Encoder (TDE) and Simulated Heatmaps.

Orthogonal-Time-Frequency-Space Signal Design for Integrated Data and Energy Transfer: Benefits from Doppler Offsets

no code implementations • 3 Feb 2023 • Jie Hu, Ke Xu, Luping Xiang, Kun Yang

Integrated data and energy transfer (IDET) is an advanced technology for enabling energy sustainability for massively deployed low-power electronic consumption components.

Elastic Aggregation for Federated Optimization

1 code implementation • CVPR 2023 • Dengsheng Chen, Jie Hu, Vince Junkai Tan, Xiaoming Wei, Enhua Wu

Federated learning enables the privacy-preserving training of neural network models using real-world data across distributed clients.

MSRA-SR: Image Super-resolution Transformer with Multi-scale Shared Representation Acquisition

no code implementations • ICCV 2023 • Xiaoqiang Zhou, Huaibo Huang, Ran He, Zilei Wang, Jie Hu, Tieniu Tan

In particular, self-attention with cross-scale matching and convolution filters with different kernel sizes are designed to exploit the multi-scale features in images.

Toward Accurate Post-Training Quantization for Image Super Resolution

2 code implementations • CVPR 2023 • Zhijun Tu, Jie Hu, Hanting Chen, Yunhe Wang

In this paper, we study post-training quantization (PTQ) for image super resolution using only a few unlabeled calibration images.

RefSR-NeRF: Towards High Fidelity and Super Resolution View Synthesis

1 code implementation • CVPR 2023 • Xudong Huang, Wei Li, Jie Hu, Hanting Chen, Yunhe Wang

We present Reference-guided Super-Resolution Neural Radiance Field (RefSR-NeRF) that extends NeRF to super resolution and photorealistic novel view synthesis.

DCS-RISR: Dynamic Channel Splitting for Efficient Real-world Image Super-Resolution

no code implementations • 15 Dec 2022 • Junbo Qiao, Shaohui Lin, Yunlun Zhang, Wei Li, Jie Hu, Gaoqi He, Changbo Wang, Lizhuang Ma

Real-world image super-resolution (RISR) has received increased focus for improving the quality of SR images under unknown complex degradation.

SISO-OFDM and MISO-OFDM Counterparts for "Wideband Waveforming for Integrated Data and Energy Transfer: Creating Extra Gain Beyond Multiple Antennas and Multiple Carriers"

no code implementations • 8 Dec 2022 • Zhonglun Wang, Jie Hu, Kun Yang

In this article, we propose the SISO-OFDM and MISO-OFDM based IDET systems, which are the counterparts of our optimal wideband waveforming strategy in [1].

Prediction of superconducting properties of materials based on machine learning models

no code implementations • 6 Nov 2022 • Jie Hu, Yongquan Jiang, Yang Yan, Houchen Zuo

Based on this, this manuscript proposes the use of XGBoost model to identify superconductors; the first application of deep forest model to predict the critical temperature of superconductors; the first application of deep forest to predict the band gap of materials; and application of a new sub-network model to predict the Fermi energy level of materials.

Rethinking skip connection model as a learnable Markov chain

1 code implementation • 30 Sep 2022 • Dengsheng Chen, Jie Hu, Wenwen Qiang, Xiaoming Wei, Enhua Wu

In this work, we deep dive into the model's behaviors with skip connections which can be formulated as a learnable Markov chain.

Bi-SIS Epidemics on Graphs -- Quantitative Analysis of Coexistence Equilibria

no code implementations • 15 Sep 2022 • Vishwaraj Doshi, Jie Hu, Do Young Eun

We consider a system in which two viruses of the Susceptible-Infected-Susceptible (SIS) type compete over general, overlaid graphs.

Efficiency Ordering of Stochastic Gradient Descent

no code implementations • 15 Sep 2022 • Jie Hu, Vishwaraj Doshi, Do Young Eun

We consider the stochastic gradient descent (SGD) algorithm driven by a general stochastic sequence, including i.i.d. noise and random walk on an arbitrary graph, among others; and analyze it in the asymptotic sense.

Deep Machine Learning Reconstructing Lattice Topology with Strong Thermal Fluctuations

no code implementations • 8 Aug 2022 • Xiao-Han Wang, Pei Shi, Bin Xi, Jie Hu, Shi-Ju Ran

In this work, we demonstrate the validity of the deep convolutional neural network (CNN) on reconstructing the lattice topology (i.e., spin connectivities) in the presence of strong thermal fluctuations and unbalanced data.

DRAformer: Differentially Reconstructed Attention Transformer for Time-Series Forecasting

no code implementations • 11 Jun 2022 • Benhan Li, Shengdong Du, Tianrui Li, Jie Hu, Zhen Jia

Time-series forecasting plays an important role in many real-world scenarios, such as equipment life cycle forecasting, weather forecasting, and traffic flow forecasting.

Nearest Neighbor Classifier with Margin Penalty for Active Learning

1 code implementation • 17 Mar 2022 • Yuan Cao, Zhiqiao Gao, Jie Hu, MingChuan Yang, Jinpeng Chen

As a result, informative samples in the margin area cannot be discovered and AL performance is damaged.

Spatio-Temporal Latent Graph Structure Learning for Traffic Forecasting

no code implementations • 25 Feb 2022 • Jiabin Tang, Tang Qian, Shijing Liu, Shengdong Du, Jie Hu, Tianrui Li

Accurate traffic forecasting, the foundation of intelligent transportation systems (ITS), has never been more significant than nowadays due to the prosperity of smart cities and urban computing.

Elastic-Link for Binarized Neural Network

no code implementations • 19 Dec 2021 • Jie Hu, Ziheng Wu, Vince Tan, Zhilin Lu, Mengze Zeng, Enhua Wu

For example, we raise the top-1 accuracy of binarized ResNet26 from 57.9% to 64.0%.

Patent Data for Engineering Design: A Critical Review and Future Directions

no code implementations • 15 Nov 2021 • Shuo Jiang, Serhad Sarica, Binyang Song, Jie Hu, Jianxi Luo

Patent data have long been used for engineering design research because of their large and expanding size and the widely varying, massive amount of design information contained in patents.

GIR Framework: Learning Graph Positional Embeddings with Anchor Indication and Path Encoding

no code implementations • 29 Sep 2021 • Yuheng Lu, Jinpeng Chen, Chuxiong Sun, Jie Hu

In this work, we propose a novel framework which follows the anchor-based idea and aims at conveying distance information implicitly along the MPNN message passing steps for encoding position information, node attributes, and graph structure in a more flexible way.

Domain-Invariant Representation Learning with Global and Local Consistency

no code implementations • 29 Sep 2021 • Wenwen Qiang, Jiangmeng Li, Jie Hu, Bing Su, Changwen Zheng, Hui Xiong

In this paper, we give an analysis of the existing representation learning framework of unsupervised domain adaptation and show that the learned feature representations of the source domain samples are with discriminability, compressibility, and transferability.

Prediction of properties of metal alloy materials based on machine learning

no code implementations • 20 Sep 2021 • Houchen Zuo, Yongquan Jiang, Yan Yang, Jie Hu

The experimental results show that the machine learning can predict the material properties accurately.

LODE: Deep Local Deblurring and A New Benchmark

1 code implementation • 19 Sep 2021 • Zerun Wang, Liuyu Xiang, Fan Yang, Jinzhao Qian, Jie Hu, Haidong Huang, Jungong Han, Yuchen Guo, Guiguang Ding

While recent deep deblurring algorithms have achieved remarkable progress, most existing methods focus on the global deblurring problem, where the image blur mostly arises from severe camera shake.

OpenFed: A Comprehensive and Versatile Open-Source Federated Learning Framework

1 code implementation • 16 Sep 2021 • Dengsheng Chen, Vince Tan, Zhilin Lu, Jie Hu

Federated Learning alleviates these problems by decentralizing model training, thereby removing the need for data transfer and aggregation.

Information Theory-Guided Heuristic Progressive Multi-View Coding

no code implementations • 6 Sep 2021 • Jiangmeng Li, Wenwen Qiang, Hang Gao, Bing Su, Farid Razzak, Jie Hu, Changwen Zheng, Hui Xiong

To this end, we rethink the existing multi-view learning paradigm from the information theoretical perspective and then propose a novel information theoretical framework for generalized multi-view learning.

Unifying Nonlocal Blocks for Neural Networks

1 code implementation • ICCV 2021 • Lei Zhu, Qi She, Duo Li, Yanye Lu, Xuejing Kang, Jie Hu, Changhu Wang

The nonlocal-based blocks are designed for capturing long-range spatial-temporal dependencies in computer vision tasks.

Deep Learning for Technical Document Classification

no code implementations • 27 Jun 2021 • Shuo Jiang, Jie Hu, Christopher L. Magee, Jianxi Luo

In large technology companies, the requirements for managing and organizing technical documents created by engineers and managers have increased dramatically in recent years, which has led to a higher demand for more scalable, accurate, and automated document classification.

Predicting Quantum Potentials by Deep Neural Network and Metropolis Sampling

no code implementations • 6 Jun 2021 • Rui Hong, Peng-Fei Zhou, Bin Xi, Jie Hu, An-Chun Ji, Shi-Ju Ran

The hybridizations of machine learning and quantum physics have caused essential impacts to the methodology in both fields.

Data-Driven Design-by-Analogy: State of the Art and Future Directions

no code implementations • 3 Jun 2021 • Shuo Jiang, Jie Hu, Kristin L. Wood, Jianxi Luo

Design-by-Analogy (DbA) is a design methodology wherein new solutions, opportunities or designs are generated in a target domain based on inspiration drawn from a source domain; it can benefit designers in mitigating design fixation and improving design ideation outcomes.

Graph Inference Representation: Learning Graph Positional Embeddings with Anchor Path Encoding

no code implementations • 9 May 2021 • Yuheng Lu, Jinpeng Chen, Chuxiong Sun, Jie Hu

We show that GIRs achieve superior results in position-aware scenarios, and that the performance of typical GNNs can be improved by fusing GIR embeddings.

ISTR: End-to-End Instance Segmentation with Transformers

1 code implementation • 3 May 2021 • Jie Hu, Liujuan Cao, Yao Lu, Shengchuan Zhang, Yan Wang, Ke Li, Feiyue Huang, Ling Shao, Rongrong Ji

However, such an upgrade is not applicable to instance segmentation, due to its significantly higher output dimensions compared to object detection.

Ranked #21 on Instance Segmentation on COCO test-dev

Scalable and Adaptive Graph Neural Networks with Self-Label-Enhanced training

1 code implementation • 19 Apr 2021 • Chuxiong Sun, Hongming Gu, Jie Hu

To further improve scalable models on semi-supervised learning tasks, we propose Self-Label-Enhance (SLE) framework combining self-training approach and label propagation in depth.

Ranked #4 on Node Property Prediction on ogbn-papers100M

Learning the Superpixel in a Non-iterative and Lifelong Manner

1 code implementation • CVPR 2021 • Lei Zhu, Qi She, Bin Zhang, Yanye Lu, Zhilin Lu, Duo Li, Jie Hu

Superpixel is generated by automatically clustering pixels in an image into hundreds of compact partitions, which is widely used to perceive the object contours for its excellent contour adherence.

Involution: Inverting the Inherence of Convolution for Visual Recognition

13 code implementations • CVPR 2021 • Duo Li, Jie Hu, Changhu Wang, Xiangtai Li, Qi She, Lei Zhu, Tong Zhang, Qifeng Chen

Convolution has been the core ingredient of modern neural networks, triggering the surge of deep learning in vision.

Ranked #705 on Image Classification on ImageNet

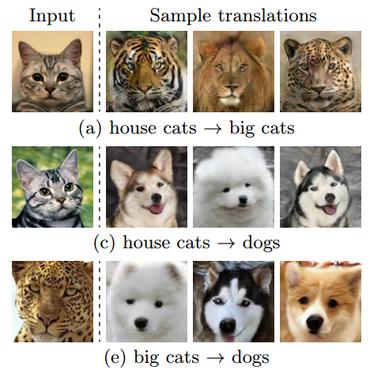

Image-to-image Translation via Hierarchical Style Disentanglement

1 code implementation • CVPR 2021 • Xinyang Li, Shengchuan Zhang, Jie Hu, Liujuan Cao, Xiaopeng Hong, Xudong Mao, Feiyue Huang, Yongjian Wu, Rongrong Ji

Recently, image-to-image translation has made significant progress in achieving both multi-label (i.e., translation conditioned on different labels) and multi-style (i.e., generation with diverse styles) tasks.

Disentanglement

Multimodal Unsupervised Image-To-Image Translation

Adaptive Graph Diffusion Networks

1 code implementation • 30 Dec 2020 • Chuxiong Sun, Jie Hu, Hongming Gu, Jinpeng Chen, MingChuan Yang

Until the date of submission (Aug 26, 2022), AGDNs achieve top-1 performance on the ogbn-arxiv, ogbn-proteins and ogbl-ddi datasets and top-3 performance on the ogbl-citation2 dataset.

Ranked #1 on Link Property Prediction on ogbl-citation2

A Survey on Machine Reading Comprehension: Tasks, Evaluation Metrics and Benchmark Datasets

no code implementations • 21 Jun 2020 • Changchang Zeng, Shaobo Li, Qin Li, Jie Hu, Jianjun Hu

Machine Reading Comprehension (MRC) is a challenging Natural Language Processing (NLP) research field with wide real-world applications.

Robust Motion Averaging under Maximum Correntropy Criterion

no code implementations • 21 Apr 2020 • Jihua Zhu, Jie Hu, Huimin Lu, Badong Chen, Zhongyu Li

Recently, the motion averaging method has been introduced as an effective means to solve the multi-view registration problem.

Architecture Disentanglement for Deep Neural Networks

1 code implementation • ICCV 2021 • Jie Hu, Liujuan Cao, Qixiang Ye, Tong Tong, Shengchuan Zhang, Ke Li, Feiyue Huang, Rongrong Ji, Ling Shao

Based on the experimental results, we present three new findings that provide fresh insights into the inner logic of DNNs.

A Convolutional Neural Network-based Patent Image Retrieval Method for Design Ideation

no code implementations • 10 Mar 2020 • Shuo Jiang, Jianxi Luo, Guillermo Ruiz Pava, Jie Hu, Christopher L. Magee

This approach is also illustrated in a case study of robot arm design retrieval.

Interpretable Machine Learning Model for Early Prediction of Mortality in Elderly Patients with Multiple Organ Dysfunction Syndrome (MODS): a Multicenter Retrospective Study and Cross Validation

no code implementations • 28 Jan 2020 • Xiaoli Liu, Pan Hu, Zhi Mao, Po-Chih Kuo, Peiyao Li, Chao Liu, Jie Hu, Deyu Li, Desen Cao, Roger G. Mark, Leo Anthony Celi, Zhengbo Zhang, Feihu Zhou

This study aims to develop an interpretable and generalizable model for early mortality prediction in elderly patients with MODS.

Information Competing Process for Learning Diversified Representations

1 code implementation • NeurIPS 2019 • Jie Hu, Rongrong Ji, Shengchuan Zhang, Xiaoshuai Sun, Qixiang Ye, Chia-Wen Lin, Qi Tian

Learning representations with diversified information remains an open problem.

Attribute Guided Unpaired Image-to-Image Translation with Semi-supervised Learning

1 code implementation • 29 Apr 2019 • Xinyang Li, Jie Hu, Shengchuan Zhang, Xiaopeng Hong, Qixiang Ye, Chenglin Wu, Rongrong Ji

In particular, AGUIT benefits in two ways: (1) It adopts a novel semi-supervised learning process by translating attributes of labeled data to unlabeled data, and then reconstructing the unlabeled data by a cycle consistency operation.

FluidC+: A novel community detection algorithm based on fluid propagation

no code implementations • International Journal of Modern Physics C 2019 • Jinfang Sheng, Kai Wang, Zejun Sun, Jie Hu, Bin Wang, Aman Ullah

In recent years, community detection has gradually become a hot topic in the complex network data mining field.

Combining RGB and Points to Predict Grasping Region for Robotic Bin-Picking

no code implementations • 16 Apr 2019 • Quanquan Shao, Jie Hu

This paper focuses on robotic picking tasks in cluttered scenarios.

Suction Grasp Region Prediction using Self-supervised Learning for Object Picking in Dense Clutter

no code implementations • 16 Apr 2019 • Quanquan Shao, Jie Hu, Weiming Wang, Yi Fang, Wenhai Liu, Jin Qi, Jin Ma

This paper focuses on robotic picking tasks in cluttered scenarios.

Towards Visual Feature Translation

1 code implementation • CVPR 2019 • Jie Hu, Rongrong Ji, Hong Liu, Shengchuan Zhang, Cheng Deng, Qi Tian

In this paper, we make the first attempt towards visual feature translation to break through the barrier of using features across different visual search systems.

Gather-Excite: Exploiting Feature Context in Convolutional Neural Networks

9 code implementations • NeurIPS 2018 • Jie Hu, Li Shen, Samuel Albanie, Gang Sun, Andrea Vedaldi

We also propose a parametric gather-excite operator pair which yields further performance gains, relate it to the recently-introduced Squeeze-and-Excitation Networks, and analyse the effects of these changes to the CNN feature activation statistics.

An In-field Automatic Wheat Disease Diagnosis System

no code implementations • 26 Sep 2017 • Jiang Lu, Jie Hu, Guannan Zhao, Fenghua Mei, Chang-Shui Zhang

Crop diseases are responsible for major production reductions and economic losses in the agricultural industry worldwide.

Squeeze-and-Excitation Networks

82 code implementations • CVPR 2018 • Jie Hu, Li Shen, Samuel Albanie, Gang Sun, Enhua Wu

Squeeze-and-Excitation Networks formed the foundation of our ILSVRC 2017 classification submission which won first place and reduced the top-5 error to 2.251%, surpassing the winning entry of 2016 by a relative improvement of ~25%.

Ranked #59 on Image Classification on CIFAR-10

A DNN Framework For Text Image Rectification From Planar Transformations

no code implementations • 14 Nov 2016 • Chengzhe Yan, Jie Hu, Chang-Shui Zhang

In this paper, a novel neural network architecture is proposed attempting to rectify text images with mild assumptions.

A Key Volume Mining Deep Framework for Action Recognition

no code implementations • CVPR 2016 • Wangjiang Zhu, Jie Hu, Gang Sun, Xudong Cao, Yu Qiao

Training with a large proportion of irrelevant volumes will hurt performance.